Azure Stream Analytics can be used to stream data to different targets, including Power BI. In this post, I will show how to stream data from Blob storage to a Power BI dataset. Normally, the data in this scenario comes from IoT devices or other sources that push data very frequently. To keep this post simple for me and easy for you to reproduce, I only use resources that are normally available. Okay, let us start building the environment.

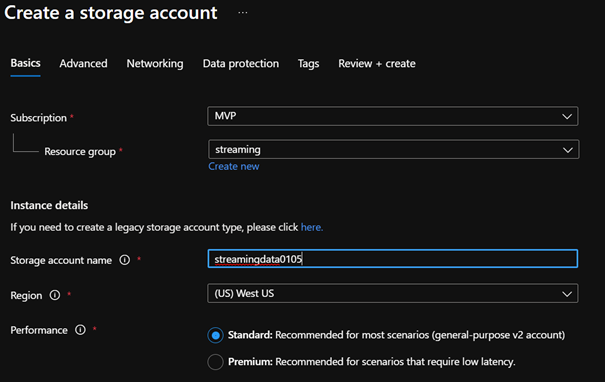

First, I create a storage account. Please make sure that you create this account in the region where you also want to create the Stream Analytics job. This keeps the setup simple, saves money, and minimizes latency.

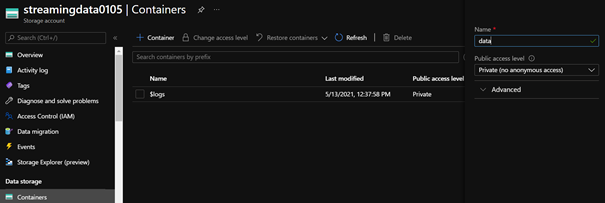

Next, we need our data intake container, where we store the data that will be imported into our Power BI environment.
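If you prefer scripting over the portal, the storage account and the container can also be created with the Az PowerShell module. This is only a sketch: the resource group name `rg-streaming-demo` and the region `westeurope` are assumptions of mine, while the account and container names are the ones used by the script at the end of this post. Storage account names must be globally unique, so you will need to pick your own.

```powershell
# Assumed names: adjust them to your environment
$resourceGroup = "rg-streaming-demo"   # hypothetical resource group
$location = "westeurope"               # hypothetical; use the region planned for the Stream Analytics job
$StorageAccountName = "streamingdata0105"
$containerName = "data"

# Sign in and create the resource group
Connect-AzAccount
New-AzResourceGroup -Name $resourceGroup -Location $location

# Create a general-purpose v2 storage account with locally redundant storage
$account = New-AzStorageAccount -ResourceGroupName $resourceGroup -Name $StorageAccountName -Location $location -SkuName Standard_LRS -Kind StorageV2

# Create the intake container in the new account
New-AzStorageContainer -Name $containerName -Context $account.Context
```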

To this container I upload a simple CSV file:

Category;Date;OrderId;Amount

Trucks;9/5/2021;763;2289

Imagine that your store sends you a file for every sale, and that this file should be immediately available in your Power BI report.

Next, we need our Stream Analytics job. You will find this resource in the Azure Marketplace:

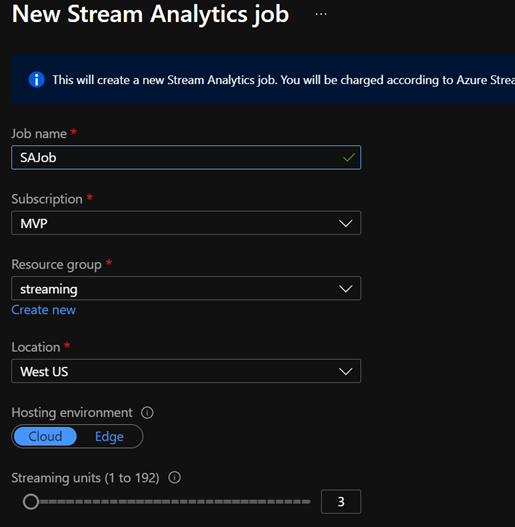

Please ensure that you create this job in the same region as the storage account. When creating the job, I leave everything at its default. The streaming units default to 3 and can be scaled as needed after the resource has been created.

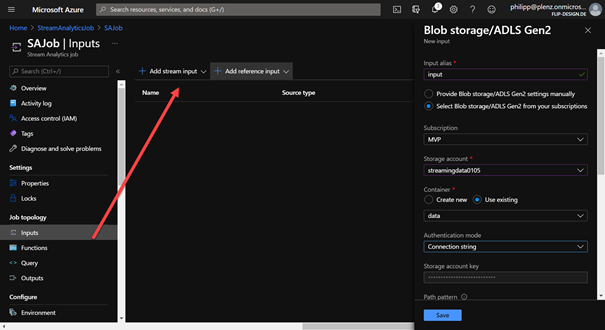

After a successful deployment, we need to configure the data intake. To do so, navigate to Inputs, select “new”, and choose Blob storage. For the authentication mode I select “connection string”. With this mode you may need to provide a key, which you can grab from your storage account under Access keys. Please also make sure that you set the data format to CSV with a semicolon as the delimiter.

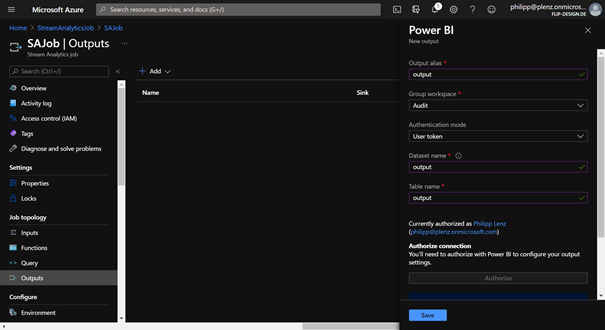
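The key can also be read with PowerShell instead of the portal. A minimal sketch, assuming the Az module is installed, you are already signed in, and the storage account lives in a resource group called `rg-streaming-demo` (a name I made up for this example):

```powershell
$resourceGroup = "rg-streaming-demo"      # hypothetical resource group name
$StorageAccountName = "streamingdata0105"

# Returns both access keys of the account; take the value of the first one
$keys = Get-AzStorageAccountKey -ResourceGroupName $resourceGroup -Name $StorageAccountName
$accesskey = $keys[0].Value
```

Treat the key like a password and avoid checking it into source control.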

Now we need our output. For this, I only created a new workspace in my Power BI tenant. You can use an existing one, but to keep a better overview, I created a new one. Under Outputs, I then created a new output of type Power BI.

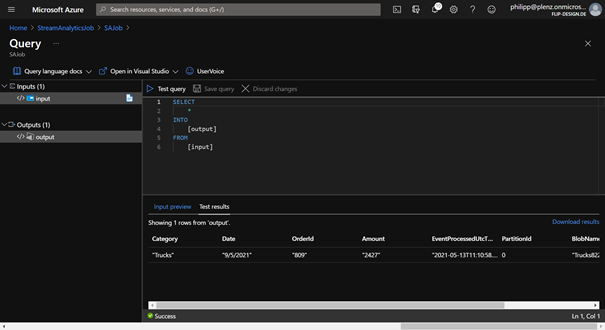

Back on the Overview page, we can edit our query, which selects the data from the input and pushes it to the output.

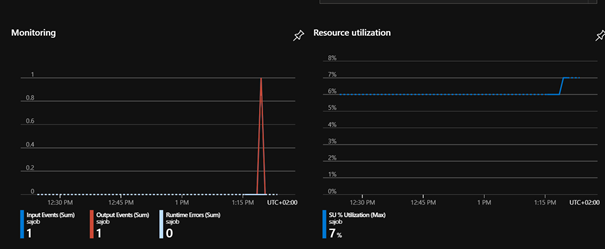
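A query that passes every field from the input to the output unchanged typically has the shape below. The aliases `blobinput` and `powerbioutput` are placeholders of mine, so replace them with the names you gave your own input and output. I keep the query in a PowerShell here-string here only so that it can be printed and copied into the query editor.

```powershell
# Placeholder aliases: replace them with the names of your own input and output
$inputAlias = "blobinput"
$outputAlias = "powerbioutput"

# Pass-through query: every column of each incoming row goes to the output
$query = @"
SELECT
    *
INTO
    [$outputAlias]
FROM
    [$inputAlias]
"@

# Print the query so it can be copied into the query editor
$query
```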

Now everything is ready, and we can start the job. Once the job is running and we provide new data, a new dataset appears in our Power BI workspace, containing the data from the blob storage. To provide new data, simply upload a new file. You can check the processing on the job's Overview page.

By creating a new report in Power BI based on the new dataset, you can check the data. The dataset also contains some information about the data file and the import process. I think it is more fun when new data arrives automatically, so I created a PowerShell script for this. To configure the script, you also need the access key of your storage account. After that, you can run the script in a loop.

```powershell
# Access key and names of the storage account and container
$accesskey = "f1vxAsiHHWvdEF8lfAExLq66rghWfqzXZoR3ugq9p5qnqDTnIt4DAUvz4luHEJ5w1Gbcg+yy6jT+EEvHJGaXtA=="
$StorageAccountName = "streamingdata0105"
$containerName = "data"

# Derive sample values from the current time
$date = Get-Date
$category = "Trucks"
$amount = $date.Millisecond

# Local temp file, named after the category and the time of day
$filename = $category + $date.Hour + $date.Minute + $date.Second + ".csv"
$path = "c:\temp\files\"
$tempfilepath = $path + $filename

# Header row plus one data row: OrderId is the millisecond value, Amount is three times that
$output = @("Category;Date;OrderId;Amount")
$output += @($category + ";" + $date.Day + "/" + $date.Month + "/" + $date.Year + ";" + $amount + ";" + $amount * 3)
$output | Out-File -FilePath $tempfilepath

# Sign in and upload the file to the container
Connect-AzAccount
$StorageContext = New-AzStorageContext -StorageAccountName $StorageAccountName -StorageAccountKey $accesskey
Set-AzStorageBlobContent -File $tempfilepath -Container $containerName -Blob $filename -Context $StorageContext
```
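To run the script in a loop, one option is to save it to a file and call it repeatedly. A rough sketch, assuming the script is saved as `Send-SampleData.ps1` in the current folder (the file name, the ten iterations, and the five-second pause are all arbitrary choices of mine):

```powershell
# Hypothetical file name: save the upload script under this name first
$scriptPath = ".\Send-SampleData.ps1"

# Upload ten files, one every five seconds
foreach ($i in 1..10) {
    & $scriptPath
    Start-Sleep -Seconds 5
}
```

Because the blob name is built from the hour, minute, and second, a pause of at least one second keeps every file name unique. Note that `Connect-AzAccount` inside the script runs on every iteration, so you may want to move it out of the script and sign in once before the loop starts.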